Ever found yourself wondering if ‘Edge AI’ is just another tech buzzword, or if it truly holds the key to the future? Well, if that thought has crossed your mind, you’re on the right track! This isn’t just jargon; it’s a fundamental shift in how artificial intelligence operates, propelling us into an era where intelligence lives closer to the action, right where your data is born.

Imagine your everyday smart devices – from the smartphone in your pocket to the security camera watching over your home, or even the complex sensors in a sprawling industrial plant – suddenly gaining the ability to make incredibly smart decisions *on the spot*. No need to ship every single byte of data all the way to a distant cloud server. That, in essence, is the magic of Edge AI. It’s about decentralizing intelligence, giving these devices their own localized ‘brain’.

This paradigm shift brings with it a cascade of benefits: fast responses for real-time applications, better privacy because sensitive data can stay local instead of traversing the internet, and real efficiency gains from cutting bandwidth consumption. Think of a security camera powered by Edge AI: instead of continuously streaming raw video footage to the cloud, it might only send an alert when it detects something unusual, like an unrecognized face or a package left at your door. It’s smarter about what it sends, faster to react, and it keeps more of your data at home.

What is Edge AI? A Closer Look at On-Device Intelligence

At its core, Edge AI refers to the deployment of artificial intelligence algorithms and machine learning models directly onto “edge devices.” These are computing devices located at or near the source of data generation, rather than relying on a centralized cloud server or data center for processing. The “edge” can be almost anything: a smartphone, a smart speaker, a surveillance camera, a factory robot, a self-driving car, or even a tiny sensor in a remote location.

Traditionally, AI processing required vast computational power, typically housed in massive data centers. Data would be collected at the edge, transmitted to the cloud, processed, and then insights would be sent back. While effective for many applications, this model introduced inherent limitations. Edge AI flips this script by moving the computational “brain” to the periphery of the network, minimizing the distance data has to travel for analysis.

The ‘Why’ Behind Edge AI: Unveiling Its Core Advantages

The rise of Edge AI isn’t merely a technological fad; it’s a strategic response to several critical challenges faced by traditional cloud-centric AI. Understanding these drivers illuminates why Edge AI is not just beneficial, but often essential.

1. Real-time Responsiveness and Ultra-Low Latency

For applications where decisions must be made within milliseconds, sending data to the cloud and waiting for a response is often not feasible. Think about autonomous vehicles needing to detect an obstacle and react within milliseconds, or a robotic arm in a factory needing to identify a defect and halt production immediately. Edge AI enables this real-time processing by taking the network round trip to the cloud (and its latency) out of the decision path. The ‘brain’ is on-site, allowing for immediate action based on local data.
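To get a feel for why that round trip matters, here is a rough back-of-the-envelope sketch in Python. The delay figures and the vehicle speed are illustrative assumptions, not measurements from any real system.

```python
# Illustrative assumptions, not measured values.
CLOUD_ROUND_TRIP_MS = 100.0   # assumed network round trip plus cloud inference
ON_DEVICE_MS = 10.0           # assumed local inference time
SPEED_KMH = 100.0             # assumed vehicle speed


def distance_travelled_m(speed_kmh: float, delay_ms: float) -> float:
    """Metres covered at `speed_kmh` while waiting `delay_ms` for a decision."""
    metres_per_second = speed_kmh / 3.6
    return metres_per_second * delay_ms / 1000.0


for label, delay in (("cloud", CLOUD_ROUND_TRIP_MS), ("edge", ON_DEVICE_MS)):
    metres = distance_travelled_m(SPEED_KMH, delay)
    print(f"{label}: {delay:.0f} ms -> {metres:.2f} m travelled before reacting")
```

Under these assumed numbers, the car covers roughly 2.8 m while waiting on the cloud, versus roughly 0.3 m with local inference.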

2. Enhanced Data Privacy and Security

In an era of increasing data privacy concerns and regulations such as GDPR and CCPA, keeping sensitive information local is a significant advantage. With Edge AI, raw data, especially personal or proprietary operational data, doesn’t need to leave the device or local network. This reduces its exposure to breaches in transit or in centralized cloud storage. For instance, a smart camera performing facial recognition can process faces locally, identifying known individuals without ever sending their image data to a remote server.

3. Significant Bandwidth and Cost Efficiency

Transmitting vast amounts of raw data, like continuous high-definition video feeds or massive sensor logs, to the cloud is incredibly bandwidth-intensive and can incur substantial costs. Edge AI acts as a smart filter. It processes data locally, extracts only the most critical insights or anomalies, and then sends only this much smaller, pre-processed information to the cloud (if needed at all). This drastically reduces bandwidth requirements, lowers operational costs, and makes AI viable in areas with limited or intermittent connectivity.
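The scale of the saving is easy to estimate. The sketch below compares a camera that streams continuously with one that only uploads alerts. Every figure in it (bitrate, alert count, alert size) is an assumption chosen for illustration.

```python
# Illustrative numbers only; none of these figures come from a real deployment.
STREAM_MBPS = 4.0            # assumed bitrate of one continuous video stream
SECONDS_PER_DAY = 24 * 60 * 60
ALERTS_PER_DAY = 50          # assumed number of events worth reporting
ALERT_BYTES = 200_000        # assumed size of one alert (metadata plus a snapshot)


def daily_stream_bytes(mbps: float) -> float:
    """Bytes uploaded per day by a continuous stream at `mbps` megabits per second."""
    return mbps * 1_000_000 / 8 * SECONDS_PER_DAY


def daily_alert_bytes(alerts: int, bytes_per_alert: int) -> int:
    """Bytes uploaded per day when only alerts are sent."""
    return alerts * bytes_per_alert


stream = daily_stream_bytes(STREAM_MBPS)
alerts = daily_alert_bytes(ALERTS_PER_DAY, ALERT_BYTES)
print(f"continuous stream: {stream / 1e9:.1f} GB/day")
print(f"alerts only:       {alerts / 1e6:.1f} MB/day")
print(f"reduction factor:  {stream / alerts:.0f}x")
```

With these assumptions the stream uploads about 43.2 GB per day and the alert-only camera about 10 MB, a reduction of roughly 4,000x. Real ratios depend entirely on how often something worth reporting happens.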

4. Improved Reliability and Offline Operation

Cloud connectivity isn’t always guaranteed. In remote areas, during network outages, or in critical industrial environments, constant internet access can be unreliable. Edge AI systems can continue to function autonomously even without a connection to the cloud, ensuring uninterrupted operation. This local resilience is vital for mission-critical applications where downtime is unacceptable.
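One common way to get this kind of resilience is a store-and-forward queue: the device keeps working, holds outgoing messages locally, and delivers them once the link returns. The following is a minimal sketch of that idea. The class name, the `send` callback, and the simulated link are all hypothetical.

```python
from collections import deque


class StoreAndForward:
    """Queue outgoing messages locally and deliver them when the link returns."""

    def __init__(self, send, capacity=1000):
        self._send = send                        # callable: message -> bool (True on success)
        self._pending = deque(maxlen=capacity)   # when full, the oldest message is dropped

    def submit(self, message):
        """Queue a message, then try to deliver everything that is pending."""
        self._pending.append(message)
        return self.flush()

    def flush(self):
        """Deliver queued messages in order; stop at the first failure."""
        while self._pending:
            if not self._send(self._pending[0]):
                return False
            self._pending.popleft()
        return True

    def __len__(self):
        return len(self._pending)


# Simulated uplink: `link` and `delivered` stand in for a real network client.
link = {"up": False}
delivered = []


def fake_send(message):
    if link["up"]:
        delivered.append(message)
        return True
    return False


outbox = StoreAndForward(fake_send, capacity=3)
outbox.submit("alert-1")      # link is down, so this is queued
outbox.submit("alert-2")
link["up"] = True
outbox.submit("alert-3")      # link is back: all three are delivered in order
print(delivered)
```

The bounded queue is a deliberate choice for memory-constrained devices: during a long outage the oldest messages are discarded rather than letting the buffer grow without limit.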

How Edge AI Works: Bringing the Brain Closer to the Action

The operational mechanics of Edge AI revolve around equipping devices with the necessary intelligence to perform inference locally. Here’s a breakdown:

1. Optimized Machine Learning Models

Unlike cloud-based models, which can be massive, Edge AI models are specifically designed to be lightweight and efficient. This involves techniques like quantization (reducing the numerical precision of model weights, for example from 32-bit floats to 8-bit integers), pruning (removing redundant connections), and distillation (training a smaller model to mimic a larger one), so that the model fits within the constrained memory and processing power of edge devices. These optimized models are then deployed to the devices.
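To make quantization concrete, here is a minimal sketch of symmetric per-tensor int8 quantization in plain Python. It is one simple variant among several, and the function names and sample weights are made up for illustration. Production toolchains do considerably more, such as calibration and per-channel scales.

```python
def quantize_symmetric_int8(weights):
    """Map float weights to integers in [-127, 127] using a single shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0   # avoid a zero scale for all-zero input
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the integers and the scale."""
    return [q * scale for q in quantized]


# Hypothetical weights standing in for one layer of a trained model.
weights = [0.42, -1.27, 0.003, 0.9, -0.05]
q, scale = quantize_symmetric_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("quantized:", q)
print("scale:", scale)
print("largest rounding error:", max_error)
```

Each weight now needs one byte instead of four for a 32-bit float, at the cost of a rounding error of at most half the scale per weight.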

2. Specialized Edge Hardware

Running complex AI tasks on small devices requires specialized hardware. This includes:

- AI Accelerators: Dedicated chips (like NPUs – Neural Processing Units, TPUs – Tensor Processing Units, or specialized GPUs) designed to efficiently execute AI computations.

- System-on-Chips (SoCs): Integrated circuits that combine all necessary components (CPU, GPU, memory, AI accelerator) into a single chip, optimizing for size, power, and cost.

- Low-Power Processors: CPUs and microcontrollers optimized for minimal power consumption, crucial for battery-powered or remote edge devices.

3. On-Device Inference

Once the optimized model is deployed and the device is collecting data from its sensors (cameras, microphones, temperature sensors, etc.), the Edge AI system performs inference locally. Inference is the process of using a trained machine learning model to make predictions or decisions on new, unseen data. For example, a security camera identifies a human silhouette, or a factory sensor detects an anomaly in machine vibrations.
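As a toy illustration of inference, the sketch below scores a window of vibration readings with a tiny logistic model. The weights, bias, feature choice, and sample readings are all hypothetical. In practice the parameters would come from a model trained elsewhere and shipped to the device.

```python
import math

# Hypothetical parameters, standing in for a model trained elsewhere.
WEIGHTS = (3.0, 1.5)   # one weight per feature: (rms, peak)
BIAS = -4.0


def extract_features(window):
    """Summarise a window of vibration samples as (root mean square, peak amplitude)."""
    rms = math.sqrt(sum(x * x for x in window) / len(window))
    peak = max(abs(x) for x in window)
    return rms, peak


def predict_anomaly(window, threshold=0.5):
    """Return (probability, is_anomaly) for one window of sensor readings."""
    features = extract_features(window)
    logit = sum(w * f for w, f in zip(WEIGHTS, features)) + BIAS
    probability = 1.0 / (1.0 + math.exp(-logit))
    return probability, probability >= threshold


# Made-up readings: a quiet machine and a badly vibrating one.
normal = [0.1, -0.2, 0.15, -0.1, 0.05, -0.12]
faulty = [0.9, -1.4, 1.1, -1.6, 1.3, -0.8]

for name, window in (("normal", normal), ("faulty", faulty)):
    probability, is_anomaly = predict_anomaly(window)
    print(f"{name}: p={probability:.2f} anomaly={is_anomaly}")
```

Nothing here touches the network: the readings go in, a decision comes out, and both stay on the device.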

4. Selective Data Transmission (or None at All)

Based on the local inference, the edge device decides what data, if any, needs to be sent upstream. This could be:

- An alert or anomaly detected.

- Aggregated insights or summary statistics, not raw data.

- Data or model updates for periodic retraining (with federated learning, only model updates are shared, not the raw data).

- Or, in many cases, no data needs to leave the device at all, ensuring maximum privacy and minimal bandwidth usage.
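The decision described in this list can be sketched as a small function that turns local readings into an alert, a summary, or nothing at all. The message layout and field names are hypothetical. A real system would define its own schema.

```python
import json
import statistics


def build_upstream_message(readings, anomaly_detected, send_summaries=True):
    """Return the payload to send upstream, or None to send nothing.

    The message layout is hypothetical; real systems define their own schema.
    """
    if anomaly_detected:
        # An alert carries only the evidence needed to act on it.
        return {"type": "alert", "peak": max(readings, key=abs)}
    if send_summaries:
        # Summary statistics stand in for the raw samples.
        return {
            "type": "summary",
            "count": len(readings),
            "mean": statistics.fmean(readings),
            "max": max(readings),
        }
    return None  # nothing leaves the device


readings = [0.1, -0.2, 0.15, -0.1, 0.05, -0.12]
message = build_upstream_message(readings, anomaly_detected=False)
print(json.dumps(message))
```

Passing `send_summaries=False` models the strictest case from the list, where no data leaves the device unless an anomaly is detected.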

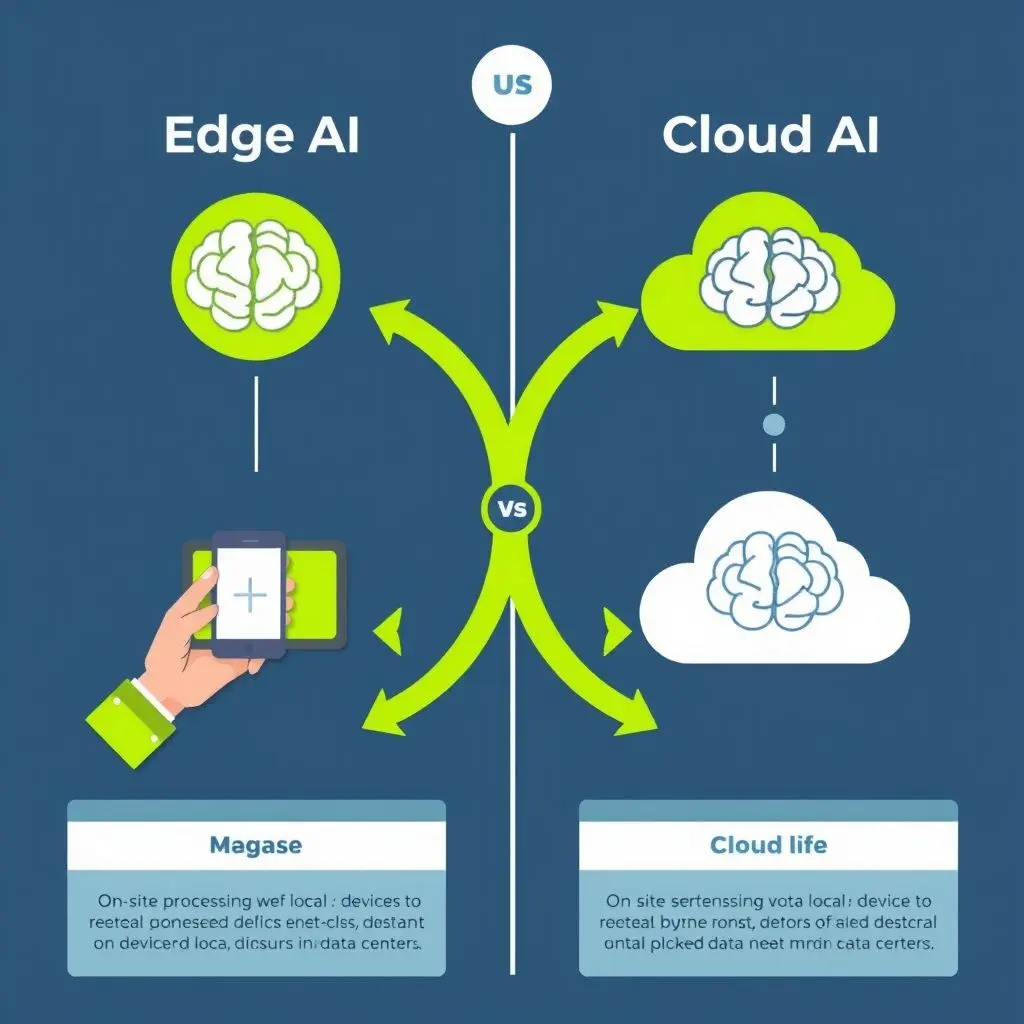

Edge AI vs. Cloud AI: A Symbiotic Relationship, Not a Rivalry

It’s crucial to understand that Edge AI isn’t meant to entirely replace cloud AI. Instead, they represent complementary approaches, often working in tandem to create powerful, hybrid AI solutions. The choice between them, or the optimal blend, depends heavily on the specific application’s requirements.

| Feature | Edge AI | Cloud AI |
|---|---|---|
| Processing Location | On or near the data source (e.g., device, local server) | Centralized data centers |
| Latency | Very Low (real-time or near real-time) | Higher (due to network travel time) |
| Bandwidth Usage | Low (only send critical insights/metadata) | High (often raw data needs to be transmitted) |
| Data Privacy/Security | High (data stays local) | Moderate (data in transit & at rest in cloud) |
| Computational Power | Limited (optimized, specialized hardware) | Virtually limitless (scalable servers) |
| Model Complexity | Optimized, simpler models | Complex, larger models (for deep learning, extensive training) |
| Reliability | High (operates offline) | Dependent on network connectivity |
| Primary Use Case | Real-time inference, immediate action, privacy-centric tasks | Large-scale data analysis, model training, complex queries, historical trends |

In a hybrid model, Edge AI might handle immediate tasks and pre-process data, while the cloud handles long-term storage, complex analysis, global model retraining, and overarching management. This synergistic approach harnesses the strengths of both environments.

Applications of Edge AI: Where It’s Making an Impact

Edge AI is not just theoretical; it’s rapidly being deployed across a myriad of industries, transforming operations and user experiences. Here are some prominent examples:

1. Autonomous Vehicles

Self-driving cars are perhaps the quintessential example. They rely on dozens of sensors (cameras, LiDAR, radar) that, by some estimates, generate terabytes of data per hour. Shipping all of that to the cloud and waiting for an answer is impractical: the round trip is too slow for driving decisions, and the volume would overwhelm a mobile connection. Edge AI enables real-time object detection, lane keeping, collision avoidance, and navigation decisions right on the vehicle, which is critical for safety and responsiveness.

2. Smart Cities and Surveillance

Edge AI in smart city infrastructure can analyze traffic flow, detect illegal parking, monitor public safety, and manage waste collection more efficiently. Security cameras with on-device AI can identify suspicious activities, count people, or recognize license plates without sending all raw video footage to a central server, preserving privacy and saving bandwidth.

3. Industrial IoT (IIoT) and Manufacturing

In factories, Edge AI monitors machinery for anomalies, predicts maintenance needs (predictive maintenance), ensures quality control by detecting defects on assembly lines, and optimizes robotic operations. This reduces downtime, increases efficiency, and enhances worker safety by processing sensor data directly on the factory floor.

4. Healthcare and Wearables

Wearable devices with Edge AI can continuously monitor vital signs, detect falls, or analyze sleep patterns, providing immediate alerts for critical events without needing constant cloud connectivity. Portable diagnostic devices can perform preliminary analyses at the point of care, accelerating treatment decisions.

5. Consumer Electronics

Your smartphone uses Edge AI for features like facial recognition (Face ID), voice assistants (processing commands locally), computational photography enhancements, and on-device natural language processing. Smart speakers often process initial wake words at the edge, only sending more complex commands to the cloud. Drones use Edge AI for navigation, obstacle avoidance, and object tracking.

6. Retail and Logistics

Edge AI helps retailers optimize inventory, analyze customer foot traffic and behavior (anonymously), prevent theft, and personalize in-store experiences. In logistics, it can optimize delivery routes, monitor cold chain integrity, and enhance warehouse automation.

Challenges and Considerations for Edge AI Deployment

While the benefits are compelling, deploying Edge AI isn’t without its complexities. Overcoming these challenges is key to widespread adoption.

1. Hardware Constraints and Optimization

Edge devices typically have limited computational power, memory, storage, and battery life compared to cloud servers. Developing and optimizing AI models to run efficiently within these constraints requires specialized techniques and hardware. Power efficiency is paramount, especially for battery-powered IoT devices.

2. Model Development and Deployment

Training robust AI models often requires massive datasets and significant computational resources, so it is usually done in the cloud. The challenge then lies in compressing and optimizing these models for efficient inference on diverse edge hardware. Managing the lifecycle of these models – from initial deployment to updates and retraining – across potentially millions of distributed edge devices is a non-trivial task.

3. Security at the Edge

While Edge AI enhances privacy by keeping data local, it also introduces new security vulnerabilities. Edge devices are often physically accessible and can be more susceptible to tampering, theft, or unauthorized access. Ensuring the integrity of the AI models on the device and securing the data that does get transmitted requires robust security protocols, including encryption, secure boot, and tamper detection.
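As one small piece of that picture, a device can check a model file against a keyed tag before loading it. The sketch below uses HMAC-SHA256 from Python’s standard library. The key and model bytes are placeholders, and it assumes the key is stored somewhere an attacker cannot read, which is the hard part in practice. Real deployments often rely on asymmetric signatures and hardware-backed key storage instead.

```python
import hashlib
import hmac


def model_tag(model_bytes: bytes, key: bytes) -> str:
    """HMAC-SHA256 tag over the model file contents."""
    return hmac.new(key, model_bytes, hashlib.sha256).hexdigest()


def model_is_intact(model_bytes: bytes, key: bytes, expected_tag: str) -> bool:
    """Compare tags in constant time so the check does not leak partial matches."""
    return hmac.compare_digest(model_tag(model_bytes, key), expected_tag)


# Hypothetical key and model contents, for illustration only.
device_key = b"example-device-key"
original = b"\x00\x01model-weights\x02\x03"
tag = model_tag(original, device_key)

print(model_is_intact(original, device_key, tag))                # True
print(model_is_intact(original + b"tampered", device_key, tag))  # False
```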

4. Connectivity and Orchestration

Even though Edge AI reduces reliance on constant cloud connectivity, many hybrid models still require intermittent communication for updates, aggregation of insights, or advanced analytics. Managing connectivity across diverse networks (5G, Wi-Fi, LoRaWAN) and orchestrating the interaction between edge devices, edge gateways, and the cloud adds complexity.

5. Data Governance and Compliance

Even with local processing, organizations must ensure compliance with data governance regulations. Understanding what data is processed locally, what is transmitted, how it’s stored, and who has access remains critical, especially for sensitive data.

The Future of Edge AI: Towards Ubiquitous Intelligence

The trajectory of Edge AI is one of continuous growth and innovation. As 5G networks become more prevalent, promising lower latency and higher bandwidth, the synergy between edge devices and the broader network will only deepen. Technologies like TinyML are pushing AI even further down, onto microcontrollers with mere kilobytes of memory, making intelligent sensing ubiquitous.

Federated Learning, a technique where models are trained collaboratively by multiple decentralized edge devices without exchanging raw data, promises even greater privacy and efficiency for model improvement. We can anticipate more specialized hardware, more sophisticated model optimization techniques, and increasingly robust management platforms to handle the sheer scale of future Edge AI deployments.
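The aggregation step at the heart of this idea can be sketched in a few lines. The example below follows the federated averaging approach, in which a coordinator combines model weights from several devices, weighting each by how many local samples it trained on. The client weights and sample counts are made up, and the sketch leaves out everything else a real system needs, such as local training, secure aggregation, and communication.

```python
def federated_average(client_updates):
    """Combine client models into one, weighted by local sample count.

    `client_updates` is a list of (weights, num_samples) pairs, where
    `weights` is a list of floats of the same length for every client.
    """
    if not client_updates:
        raise ValueError("need at least one client update")
    total_samples = sum(n for _, n in client_updates)
    dim = len(client_updates[0][0])
    if any(len(w) != dim for w, _ in client_updates):
        raise ValueError("all clients must report the same number of weights")
    return [
        sum(w[i] * n for w, n in client_updates) / total_samples
        for i in range(dim)
    ]


# Made-up updates from two devices; only weights and counts are shared, not raw data.
updates = [
    ([1.0, 2.0], 100),   # device A trained on 100 local samples
    ([3.0, 4.0], 300),   # device B trained on 300 local samples
]
global_weights = federated_average(updates)
print(global_weights)
```

Because device B saw three times as much data, the combined model lands three quarters of the way towards its weights.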

The vision is clear: a world where intelligence isn’t confined to distant data centers but is embedded into the fabric of our physical world, making every interaction smarter, faster, and more personal. Edge AI is not just a technology; it’s a foundational element for the next generation of intelligent systems, fundamentally altering how we interact with technology and how technology interacts with our environment.

Frequently Asked Questions About Edge AI

Q1: Is Edge AI suitable for all AI applications?

No. While Edge AI offers significant advantages for real-time, privacy-sensitive, or bandwidth-constrained applications, it’s not a universal solution. Complex AI tasks requiring massive computational power for training or highly intricate data analysis are often still best performed in the cloud. Many applications will benefit most from a hybrid approach.

Q2: How does Edge AI differ from traditional IoT?

Traditional IoT devices primarily collect data and send it to a central cloud for processing. Edge AI-enabled IoT devices, often referred to as AIoT, go a step further by processing and analyzing that data locally on the device itself, making intelligent decisions without constant cloud reliance. This adds a layer of ‘smartness’ and autonomy to IoT.

Q3: What kind of hardware is needed for Edge AI?

Edge AI can run on a wide range of hardware, from powerful embedded systems with GPUs or NPUs (Neural Processing Units) in autonomous cars to tiny microcontrollers in smart home sensors (TinyML). The specific hardware depends on the complexity of the AI model and the performance requirements of the application.

Q4: Does Edge AI eliminate the need for the cloud?

Not entirely. While Edge AI reduces reliance on the cloud for immediate processing, the cloud often remains crucial for tasks like initial model training, periodic model updates, long-term data storage, large-scale analytics, and central management of distributed edge devices. They often work together in a synergistic, hybrid architecture.

Q5: Is Edge AI more secure than cloud AI?

Edge AI generally enhances data privacy by keeping sensitive raw data local, reducing exposure during transit. However, it introduces new security challenges at the device level, such as physical tampering or securing deployed models. Cloud AI has its own set of security considerations related to data centers and network security. The security profile depends on the implementation and robustness of measures taken in both environments.

Q6: Can Edge AI learn and improve over time?

Yes. While initial model training often happens in the cloud, edge devices can be updated with newer, improved models. Additionally, advanced techniques like Federated Learning allow edge devices to collaboratively train models without sending their raw data to a central server, enabling continuous improvement while preserving privacy.

A Smarter Tomorrow, Fueled by the Edge

As we’ve explored, Edge AI is far more than just a passing trend; it’s a foundational technology that’s reshaping the landscape of artificial intelligence and its real-world applications. By bringing processing power directly to our devices, it speeds up responses, strengthens privacy, and boosts efficiency across a wide range of sectors. From the rapid decisions of an autonomous vehicle to the subtle insights gleaned by a smart sensor, the ability to process data before it ever reaches the cloud is proving to be a game-changer.

The evolution continues, with innovations like 5G and TinyML promising to embed intelligence even deeper into our daily lives. So, the next time your smart device seems to anticipate your needs or reacts with uncanny speed, remember the powerful, local brain working at its ‘edge’ – quietly, efficiently, and securely crafting a smarter future. It’s an exciting time to be witnessing, and participating in, this intelligence revolution.