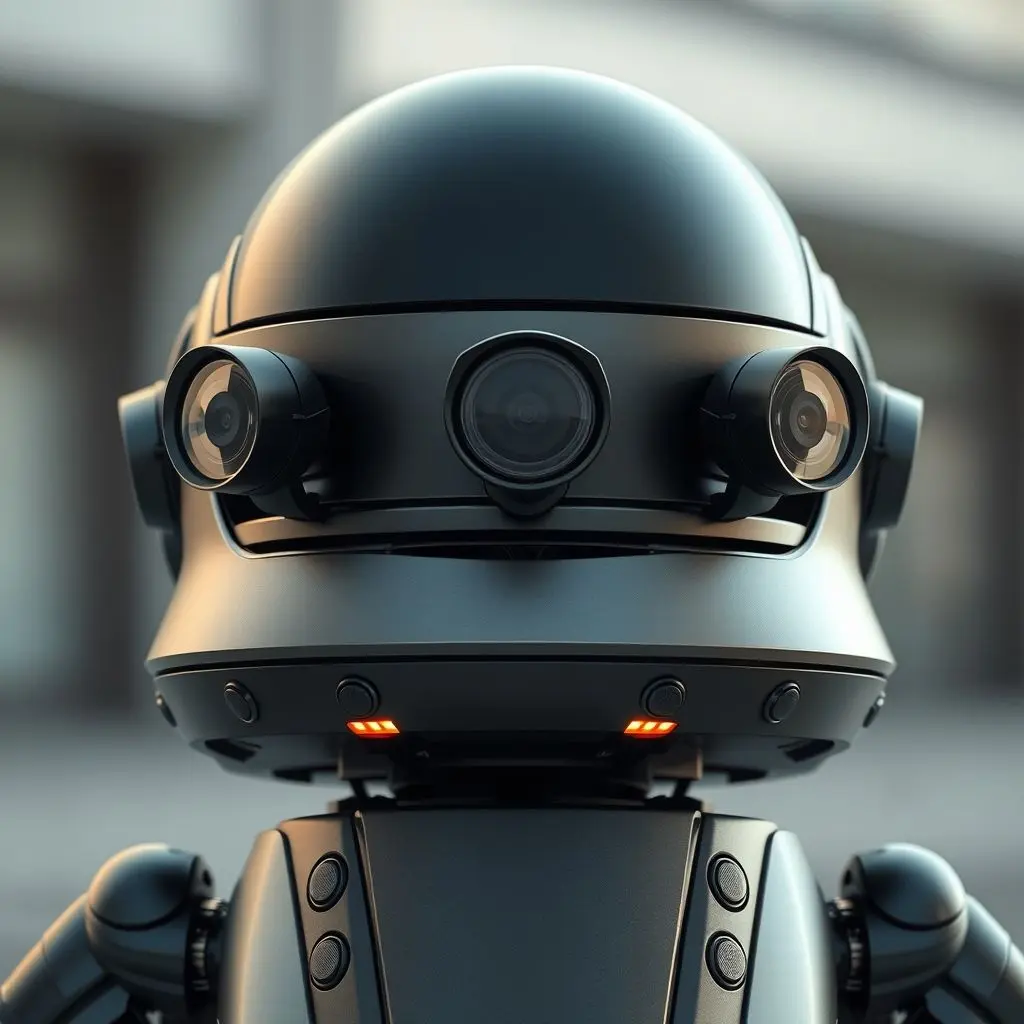

Picture this: a compact, friendly robot gliding effortlessly down a bustling sidewalk, meticulously dodging pedestrians, cyclists, and even that stray frisbee. It carries your piping hot pizza or your latest online haul with serene precision. Ever paused to marvel at this seamless dance? How do these mechanical couriers, seemingly with minds of their own, manage to avoid every potential bump, scrape, or collision in our unpredictable urban landscapes? It’s far from magic, dear reader. What you’re witnessing is a masterclass in modern robotics: a carefully orchestrated ballet of advanced sensors, intelligent algorithms, and constant learning.

Much like a skilled dancer anticipating every move on a crowded floor, delivery robots employ a sophisticated symphony of technology to perceive, interpret, and navigate their surroundings. This isn’t just about moving from point A to point B; it’s about doing so safely, efficiently, and gracefully, all while adapting to a dynamically changing world. Let’s peel back the layers and discover the fascinating engineering that keeps these bots on the straight and narrow, free from unwanted encounters.


The Sensory Arsenal: Eyes, Ears, and Feelers of a Robot

At the heart of any robot’s ability to avoid obstacles lies its comprehensive sensor suite. These are the ‘eyes’ and ‘ears’ that gather raw data from the environment, painting a rich, real-time picture of the world around them.

1. LiDAR: The Laser Eyes Mapping 3D Worlds

Imagine super-powered laser eyes that can map a 3D environment with centimeter-level precision – that’s essentially LiDAR (Light Detection and Ranging). This technology works by emitting pulses of laser light and measuring the time it takes for these pulses to return after hitting an object. By doing this thousands to millions of times per second, a LiDAR sensor generates a ‘point cloud’ – a detailed 3D representation of the robot’s surroundings.

- How it Works: In many LiDAR units, a rotating mirror or a spinning emitter assembly sweeps laser beams across a wide field of view. When these beams hit an object, they reflect back to a receiver. Because each pulse travels to the object and back, the distance to that object is half the round-trip travel time multiplied by the speed of light.

- Key Advantages:

- High Accuracy: Provides highly precise depth information, crucial for distinguishing between a curb and a pothole.

- 3D Mapping: Creates incredibly detailed three-dimensional maps of the environment.

- Works in Low Light: Unlike cameras, LiDAR performs exceptionally well in dim light or complete darkness, as it generates its own light.

- Considerations: While powerful, LiDAR can be costly and its performance can be somewhat affected by extreme weather conditions like heavy rain or dense fog, which can scatter the laser beams.
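The time-of-flight principle behind LiDAR comes down to one line of arithmetic: the pulse travels to the object and back, so the distance is half the round-trip time multiplied by the speed of light. The Python sketch below shows that calculation and how one planar sweep of returns becomes (x, y) points. The function names and the evenly spaced beam angles are illustrative assumptions, not any particular sensor's interface.

```python
import math

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def tof_to_distance(round_trip_s: float) -> float:
    """Distance (meters) to a target from a laser pulse's round-trip time.

    The pulse goes out and comes back, so the one-way distance is half of
    (speed of light * elapsed time).
    """
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


def scan_to_points(round_trip_times_s, start_angle_rad, angle_step_rad):
    """Turn one planar sweep of round-trip times into (x, y) points.

    Each return is placed at its beam angle and measured range. A multi-beam
    LiDAR stacks many such slices at different elevations into a 3D cloud.
    """
    points = []
    for i, t in enumerate(round_trip_times_s):
        r = tof_to_distance(t)
        theta = start_angle_rad + i * angle_step_rad
        points.append((r * math.cos(theta), r * math.sin(theta)))
    return points
```

For example, `tof_to_distance(20e-9)` gives about 3.0 m (2.998 m). The same formula shows why LiDAR timing electronics must be so fast: one nanosecond of timing error shifts the measured range by roughly 15 cm.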

2. High-Resolution Cameras: Visual Perception for Object Recognition

If LiDAR provides the skeleton of the world, high-resolution cameras add the flesh and color. These are not just any cameras; they’re often paired with advanced computer vision algorithms, enabling the robot to ‘see’ and interpret its environment much like a human does.

- What They See: Cameras are vital for identifying traffic lights, reading street signs, recognizing different types of pedestrians, distinguishing between vehicles and static objects, and even spotting subtle hazards like a rogue skateboard or a child’s toy left on the sidewalk.

- Computer Vision in Action: Powerful AI models process the visual feed in real-time. They can detect, classify, and track objects, estimate their speed and direction, and understand semantic information (e.g., distinguishing between a ‘tree’ and a ‘person’).

- Stereo Cameras for Depth: Many robots use stereo camera setups (two cameras placed side-by-side, mimicking human eyes) to calculate depth through triangulation, providing another layer of distance perception.

- Challenges: Camera performance can be heavily influenced by lighting conditions (bright sun glare, deep shadows, night-time), and objects can be obscured (occluded) by others.
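The triangulation behind stereo depth is usually written as Z = f * B / d. Here depth Z equals the focal length f (in pixels) times the baseline B between the two cameras (in meters), divided by the disparity d, which is how many pixels the same point shifts between the left and right images. Below is a minimal Python sketch that assumes an idealized, already-rectified camera pair. The parameter names and the example camera numbers are illustrative.

```python
def stereo_depth(focal_length_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth (meters) of a point seen by a rectified stereo camera pair.

    Uses Z = f * B / d for an ideal pinhole camera model.
    """
    if disparity_px <= 0:
        # Zero disparity means the point is effectively at infinity
        # (or it was not matched between the two images).
        raise ValueError("disparity must be positive")
    return focal_length_px * baseline_m / disparity_px


# Hypothetical camera: 700 px focal length, lenses 12 cm apart.
print(stereo_depth(700.0, 0.12, 42.0))  # about 2.0 m
print(stereo_depth(700.0, 0.12, 41.0))  # one pixel less disparity: about 2.05 m
```

Depth is inversely proportional to disparity. In this example, a one-pixel matching error changes a 2 m estimate by only about 5 cm, but the same error matters far more for distant objects. That is one reason stereo depth is usually most trusted at short to medium range.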

3. Ultrasonic Sensors: The Close-Range Bumper Guards

For immediate, close-proximity detection, delivery robots often rely on ultrasonic sensors. These are the robot’s ‘feelers,’ guarding against bumping into very near objects that might be missed by other sensors due to blind spots or specific angles.

- How They Work: Ultrasonic sensors emit high-frequency sound waves and measure the time it takes for the echo to return. This ‘time-of-flight’ data allows them to calculate the distance to nearby objects.

- Benefits:

- Cost-Effective: Relatively inexpensive compared to LiDAR or advanced cameras.

- Excellent for Short Range: Ideal for detecting objects within a few feet, such as curbs, walls, or even the legs of a table in a tight spot.

- Reliable: Unaffected by lighting conditions, since they rely on sound rather than light.

- Limitations: They have a limited range and a wider, less precise beam compared to lasers, making them unsuitable for long-range navigation but perfect for ‘bumper’ protection.
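Ultrasonic ranging uses the same out-and-back arithmetic as LiDAR, but with the speed of sound, which changes noticeably with air temperature. The Python sketch below uses a common linear approximation for the speed of sound in dry air: about 331.3 m/s at 0 °C, plus roughly 0.606 m/s per degree Celsius. The function names and the 20 °C default are assumptions for illustration.

```python
def speed_of_sound_m_per_s(air_temp_c: float) -> float:
    # Linear approximation for dry air near everyday temperatures.
    return 331.3 + 0.606 * air_temp_c


def echo_to_distance(round_trip_s: float, air_temp_c: float = 20.0) -> float:
    # The sound wave travels to the obstacle and back, so halve the path.
    return speed_of_sound_m_per_s(air_temp_c) * round_trip_s / 2.0


# An echo returning after 5.8 ms at 20 °C corresponds to roughly 1 m.
print(round(echo_to_distance(0.0058), 3))       # 0.996
print(round(echo_to_distance(0.0058, 0.0), 3))  # same echo at 0 °C: 0.961
```

The same echo gives results about 3.5 cm apart at the two temperatures. This is why temperature compensation is often applied when centimeter-level bumper distances matter.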

The Brain Behind the Brawn: AI and Navigation Software

Collecting data is one thing; making sense of it and acting upon it is another. This is where the robot’s artificial intelligence and sophisticated navigation software come into play – the ‘super-brain’ constantly calculating and recalculating.

1. Real-time Data Fusion: Building a Dynamic 3D Map

The first critical step is data fusion. Information from all sensors – the LiDAR’s precise depth, the cameras’ detailed visuals, and the ultrasonic sensors’ close-range alerts – is combined and synthesized. This isn’t just layering data; it’s merging it to create a coherent, comprehensive understanding of the environment.
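One of the simplest ways to merge overlapping measurements is inverse-variance weighting. Each sensor's estimate is weighted by how much it is trusted (one over its variance), so a precise LiDAR range counts for more than a noisier camera estimate of the same distance. The Python sketch below is a deliberately minimal stand-in for the fusion step. It assumes independent sensors with known, roughly Gaussian errors. Production systems typically use Kalman-style filters or learned fusion models, and the example variances are made up for illustration.

```python
from typing import Iterable, Tuple


def fuse_estimates(estimates: Iterable[Tuple[float, float]]) -> Tuple[float, float]:
    """Fuse independent (value, variance) estimates of the same quantity.

    Returns (fused_value, fused_variance). Lower variance means more weight.
    """
    total_weight = 0.0
    weighted_sum = 0.0
    for value, variance in estimates:
        if variance <= 0:
            raise ValueError("variance must be positive")
        weight = 1.0 / variance
        total_weight += weight
        weighted_sum += weight * value
    if total_weight == 0.0:
        raise ValueError("need at least one estimate")
    return weighted_sum / total_weight, 1.0 / total_weight


# Hypothetical readings of the distance to the same pedestrian:
lidar = (2.00, 0.02 ** 2)   # 2.00 m, ~2 cm standard deviation
camera = (2.10, 0.10 ** 2)  # 2.10 m, ~10 cm standard deviation
distance, variance = fuse_estimates([lidar, camera])
print(round(distance, 3))   # 2.004
```

Under these assumptions, the fused variance (1/2600 m², about 2 cm standard deviation) is smaller than either input's. That captures the core benefit of fusion: combining sensors yields an estimate at least as confident as the best single sensor.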

- SLAM (Simultaneous Localization and Mapping): This foundational robotics technique is a game-changer. SLAM allows a robot to build a map of an unknown environment while simultaneously keeping track of its own location within that map. As the robot moves, it continuously updates its internal map, identifying new obstacles, marking clear paths, and refining its understanding of the world.

- Dynamic 3D Map: The output of this fusion is a constantly evolving 3D map. This map isn’t static; it recognizes that the world is in motion. It tracks the movement of cars, bicycles, and pedestrians, predicting their trajectories to anticipate potential conflicts.
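Full SLAM jointly estimates the map and the robot's pose, which is too much for a short example. The mapping half, however, is often represented as an occupancy grid: the world is cut into cells, and each cell stores the log-odds that it is occupied. The Python sketch below assumes the robot's pose is already known. It also assumes that a separate ray-casting step has already decided which cells a sensor beam hit or passed through. The sensor-model probabilities (0.7/0.3) and the clamp limits are hypothetical tuning values.

```python
import math

# Hypothetical sensor model: how much one observation shifts our belief
# that a cell is occupied, expressed in log-odds.
L_OCC = math.log(0.7 / 0.3)   # beam ended in this cell (obstacle seen)
L_FREE = math.log(0.3 / 0.7)  # beam passed through this cell (free space)
L_MIN, L_MAX = -5.0, 5.0      # clamp so cells can still change their minds


class OccupancyGrid:
    def __init__(self, width: int, height: int):
        self.width = width
        self.height = height
        # Log-odds 0.0 means probability 0.5: completely unknown.
        self.log_odds = [[0.0] * width for _ in range(height)]

    def update_cell(self, x: int, y: int, occupied: bool) -> None:
        # Bayesian update in log-odds form is just an addition.
        delta = L_OCC if occupied else L_FREE
        new_value = self.log_odds[y][x] + delta
        self.log_odds[y][x] = max(L_MIN, min(L_MAX, new_value))

    def probability(self, x: int, y: int) -> float:
        # Convert log-odds back to an occupancy probability.
        return 1.0 - 1.0 / (1.0 + math.exp(self.log_odds[y][x]))
```

Clamping the log-odds keeps any cell from becoming absolutely certain. A spot once blocked by a parked scooter can therefore be marked free again after enough beams pass through it, which is a simple way for the map to keep up with a changing street.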

2. Path Planning & Obstacle Avoidance Algorithms

With a clear understanding of its environment and its own position, the robot’s AI then springs into action to plan and execute its route.

- Global vs. Local Path Planning:

- Global Planning: Before starting a journey, the robot generates an overall optimal route from its starting point to its destination based on pre-existing maps and known information.

- Local Planning: This is where real-time obstacle avoidance shines. As the robot moves, its local path planner constantly analyzes the immediate surroundings, detecting unforeseen obstacles (a sudden pedestrian, a newly parked car, a fallen branch) and dynamically adjusting its path to steer clear.

- Predictive Modeling: The AI doesn’t just react; it anticipates. Using machine learning, it predicts the likely movement of dynamic obstacles. If a pedestrian is walking towards the robot, the AI will predict where they will be in the next few seconds and plan an evasive maneuver or a gentle stop accordingly.

- Collision Avoidance Techniques: Algorithms employ various strategies, such as ‘repulsive forces’ from obstacles combined with ‘attractive forces’ towards the goal (the artificial potential field approach), and ‘velocity obstacles’ (a method for predicting collisions and calculating safe velocities to avoid them).

- Safety Protocols: Integrated safety systems include emergency braking, pre-programmed evasive maneuvers, and safe stopping distances to ensure that even in unpredictable situations, the robot prioritizes safety.
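The ‘repulsive’ and ‘attractive’ forces in the list above come from the artificial potential field method. The goal pulls the robot toward it, every nearby obstacle pushes it away, and the robot steps along the sum of those forces. The Python sketch below shows one planning step in 2D. The gains, influence radius, and step size are hypothetical tuning values, and obstacles are simplified to points.

```python
import math


def potential_field_step(robot, goal, obstacles,
                         k_att=1.0, k_rep=0.5, influence_radius=2.0, step_size=0.1):
    """Return the robot's next (x, y) after one potential-field step.

    robot, goal: (x, y) in meters. obstacles: list of (x, y) points.
    All gains and distances are hypothetical tuning values.
    """
    rx, ry = robot
    # Attractive force: pulls toward the goal, proportional to distance.
    fx = k_att * (goal[0] - rx)
    fy = k_att * (goal[1] - ry)

    for ox, oy in obstacles:
        dx, dy = rx - ox, ry - oy
        d = math.hypot(dx, dy)
        if 0.0 < d < influence_radius:
            # Negative gradient of the classic repulsive potential
            # 0.5 * k_rep * (1/d - 1/influence_radius)^2: it grows sharply
            # as d shrinks and fades to zero at the influence radius.
            magnitude = k_rep * (1.0 / d - 1.0 / influence_radius) / (d * d)
            fx += magnitude * dx / d
            fy += magnitude * dy / d

    norm = math.hypot(fx, fy)
    if norm == 0.0:
        # Forces cancel: a local minimum, the method's best-known weakness.
        return robot
    # Move a fixed distance along the direction of the combined force.
    return (rx + step_size * fx / norm, ry + step_size * fy / norm)
```

Potential fields are cheap to compute, but the forces can cancel out in a local minimum (for instance, directly in front of a wide obstacle). This is one reason practical planners often combine such reactive terms with search-based or velocity-based methods like the velocity obstacles mentioned above.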

3. Localization: Knowing Exactly Where They Are

Beyond mapping, a robot needs to know its precise location within that map. This is crucial for accurate navigation and successful delivery.

- GPS (Global Positioning System): Provides an initial, broad location. However, in urban areas with tall buildings (‘urban canyons’), GPS accuracy can be limited.

- Visual Odometry & Inertial Measurement Units (IMUs): These provide more precise self-localization. Visual odometry tracks the robot’s movement by analyzing successive camera images, while IMUs (accelerometers and gyroscopes) measure changes in orientation and acceleration. These are often fused with GPS and SLAM for robust positioning.
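Fusing a drifting but smooth motion estimate (visual odometry and IMUs) with noisy but drift-free GPS fixes is the classic job of a Kalman filter. The Python sketch below is a one-dimensional version that tracks position along a straight stretch of sidewalk. It assumes linear motion and Gaussian errors, and the variances in the example are invented for illustration. Real robots typically run multi-dimensional (often extended or unscented) filters or graph-based optimizers that also incorporate SLAM.

```python
from dataclasses import dataclass


@dataclass
class Estimate:
    position_m: float   # best guess of position along the route
    variance_m2: float  # how uncertain that guess is


def predict(est: Estimate, displacement_m: float, motion_variance_m2: float) -> Estimate:
    # Dead reckoning from odometry/IMU: shift the estimate, grow the uncertainty.
    return Estimate(est.position_m + displacement_m, est.variance_m2 + motion_variance_m2)


def update(est: Estimate, gps_position_m: float, gps_variance_m2: float) -> Estimate:
    # Blend in a GPS fix; the Kalman gain decides how much to trust it.
    gain = est.variance_m2 / (est.variance_m2 + gps_variance_m2)
    position = est.position_m + gain * (gps_position_m - est.position_m)
    variance = (1.0 - gain) * est.variance_m2
    return Estimate(position, variance)


start = Estimate(position_m=0.0, variance_m2=0.01)
moved = predict(start, displacement_m=1.0, motion_variance_m2=0.04)
fixed = update(moved, gps_position_m=1.5, gps_variance_m2=4.0)  # ~2 m GPS error
print(round(fixed.position_m, 3))  # 1.006
```

In an urban canyon, a GPS fix with meters of error barely nudges a confident odometry estimate. Each `predict` call grows the variance, however, so GPS regains influence as odometry drift accumulates.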

Learning and Adapting: The Evolution of Robot Navigation

The journey of a delivery robot is one of continuous learning. Each successful delivery, each avoided obstacle, feeds into a growing dataset that further refines its AI models.

- Continuous Learning: Through methods like reinforcement learning, robots get ‘smarter’ over time. They learn from their experiences, both successful and challenging, to improve their decision-making. Over-the-air updates ensure that an entire fleet can benefit from new lessons learned by a single bot.

- Dealing with the Unknown: While highly advanced, the real world is infinitely complex. Robots are designed with a degree of robustness to handle unforeseen scenarios or novel objects they haven’t been explicitly trained on. This often involves defaulting to safe behaviors, slowing down, or seeking human assistance.

- Redundancy: To enhance reliability, many critical systems have redundant sensors or software modules. If one sensor temporarily fails or is obscured, others can compensate, ensuring continuous operation and safety.
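The redundancy and ‘default to safe behavior’ ideas above can be combined into a small supervisory rule. The robot trusts the most conservative reading among the sensors that are still working, slows down when something is close, and stops if nothing trustworthy is left. The Python sketch below assumes each sensor reports its nearest-obstacle distance in meters, or `None` when it has failed or is obscured. The sensor names, thresholds, and speeds are hypothetical values, not figures from any real robot.

```python
from typing import Mapping, Optional

# Hypothetical thresholds (meters) and speeds (m/s), for illustration only.
STOP_DISTANCE_M = 0.5
SLOW_DISTANCE_M = 2.0
CRUISE_SPEED = 1.5
SLOW_SPEED = 0.5


def nearest_obstacle(readings: Mapping[str, Optional[float]]) -> Optional[float]:
    """Closest range among sensors that reported a value.

    A reading of None marks a failed or obscured sensor and is skipped.
    """
    healthy = [r for r in readings.values() if r is not None]
    return min(healthy) if healthy else None


def choose_speed(readings: Mapping[str, Optional[float]]) -> float:
    d = nearest_obstacle(readings)
    if d is None:
        return 0.0  # no trustworthy data at all: stop (and, in practice, ask for help)
    if d < STOP_DISTANCE_M:
        return 0.0
    if d < SLOW_DISTANCE_M:
        return SLOW_SPEED
    return CRUISE_SPEED


print(choose_speed({"lidar": None, "camera": 3.0, "ultrasonic": 2.5}))  # 1.5
```

Taking the minimum is a deliberate design choice. When healthy sensors disagree, the robot acts on the closest reported obstacle, accepting occasional unnecessary slowdowns rather than risk discounting a real one.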

The Human Element & Safety First

Despite their autonomy, safety remains paramount. Delivery robots are designed with multiple layers of safety features.

- Remote Monitoring & Teleoperation: Many robot operations include a human oversight component. If a robot encounters a particularly complex or ambiguous situation it cannot resolve autonomously (e.g., a chaotic construction zone, a blocked path), it can flag for remote human intervention. A human operator can then guide the robot remotely or provide specific instructions.

- Safety Standards & Regulations: As these robots become more common, regulatory bodies are developing standards for their safe operation on public sidewalks and roads, covering aspects like speed limits, acoustic warnings, and interaction protocols.

- Interaction with Humans and Animals: Robots are programmed to prioritize the safety of humans and animals. They are often equipped with speakers to give audible warnings (e.g., “Excuse me, robot approaching”) and will stop or yield to pedestrians and pets.

Frequently Asked Questions About Robot Obstacle Avoidance

Q1: Are delivery robots safe around children and pets?

They are designed to be. Safety is a top design priority. Robots are programmed to detect small, fast-moving objects like children and pets and to react cautiously – typically by slowing down, stopping, or giving them a wide berth. They are generally designed with low speeds and soft exteriors to minimize potential harm, even in the unlikely event of contact.

Q2: Can delivery robots operate in bad weather conditions like rain or snow?

Many modern delivery robots are designed to be weather-resistant. While heavy rain or snow can affect the performance of some sensors (especially cameras and LiDAR), most robots employ sensor redundancy and advanced algorithms to compensate. For instance, radar sensors, if included, excel in adverse weather where optical sensors might struggle. However, extreme conditions might lead to a robot suspending operations or requiring remote human assistance.

Q3: What happens if a robot’s sensor fails or gets damaged?

Delivery robots are built with redundancy in mind. If one sensor fails, the system is designed to rely on data from other working sensors to maintain navigation and safety. In critical sensor failures or if multiple systems are compromised, the robot is programmed to safely stop and alert its human operators for diagnosis or retrieval.

Q4: How accurate are the maps these robots use?

Generally very accurate. Thanks to SLAM technology and continuous updates from their own experiences and fleet learning, these robots maintain highly detailed, dynamic 3D maps. Well-tuned systems can often localize themselves to within a few centimeters of their actual position, enabling precise navigation and accurate deliveries.

Q5: Are delivery robots fully autonomous, or do humans control them?

While the goal is increasing autonomy, most delivery robots operate on a spectrum. They are largely autonomous, handling routine navigation and obstacle avoidance on their own. However, they often have a human oversight component, where operators can remotely monitor their progress and intervene via teleoperation if the robot encounters a particularly challenging or ambiguous situation it cannot resolve independently.

The Future is Smooth Sailing

The seamless journey of a delivery robot is a testament to the remarkable advancements in robotics and artificial intelligence. What once seemed like science fiction is now a daily reality, powered by laser precision, keen visual perception, and a constantly learning digital brain. As these technologies continue to evolve, we can expect even smarter, more agile, and omnipresent autonomous couriers, further integrating into our daily lives and transforming urban logistics.

The intricate dance of sensors keen and code so grand, ensures they glide through city, street, and land. No obstacle too vast or small, a perfect path, avoiding all. If you found this byte of tech wisdom enlightening, don’t just scroll past! Hit that like button faster than a robot avoiding a lamppost, and subscribe to TechOTV so you don’t miss out on more mind-blowing tech truths. Let’s grow this tech-savvy squad!